The Student Computing Research Symposium is an annual research conference jointly organized by the University of Ljubljana, the University of Maribor and the University of Primorska. Its goal is to encourage students to present and publish their research in computer science and to facilitate cooperation and creativity.

Submission Deadline

Author Notification

Camera-Ready Paper

Conference Starts

We invite both B.Sc. and M.Sc. students to submit research papers from all fields of computer science. The length of the paper is limited to 4 pages. M.Sc. students must write their papers in English, while B.Sc. students may choose either English or Slovene. If your paper passes the acceptance threshold, it will be designated either for oral presentation or for poster presentation.

Having a paper presented at a conference and published in the conference proceedings is a reward in itself. Nevertheless, the authors of the best papers, posters and/or presentations will receive additional awards contributed by the organizers and our industry partners.

The conference program is available here.

10th SCORES, University of Maribor, Faculty of Electrical Engineering and Computer Science, Maribor, Slovenia, 3 October 2024

9th SCORES, University of Primorska, Faculty of Mathematics, Natural Sciences and Information Technologies, Koper, Slovenia, 5 October 2023

8th SCORES, University of Ljubljana, Faculty of Computer and Information Science, Ljubljana, Slovenia, 6 October 2022

7th StuCoSReC, University of Maribor, Faculty of Electrical Engineering and Computer Science, Maribor, Slovenia, 14 September 2021

6th StuCoSReC, University of Primorska, Faculty of Mathematics, Natural Sciences and Information Technologies, Koper, Slovenia, 10 October 2019

5th StuCoSReC, University of Ljubljana, Faculty of Computer and Information Science, Ljubljana, Slovenia, 9 October 2018

4th StuCoSReC, University of Maribor, Faculty of Electrical Engineering and Computer Science, Maribor, Slovenia, 11 October 2017

3rd StuCoSReC, University of Primorska, Faculty of Mathematics, Natural Sciences and Information Technologies, Koper, Slovenia, 12 October 2016

2nd StuCoSReC, Jožef Stefan International Postgraduate School, Ljubljana, Slovenia, 6 October 2015

1st StuCoSReC, University of Maribor, Faculty of Electrical Engineering and Computer Science, Maribor, Slovenia, 7 October 2014

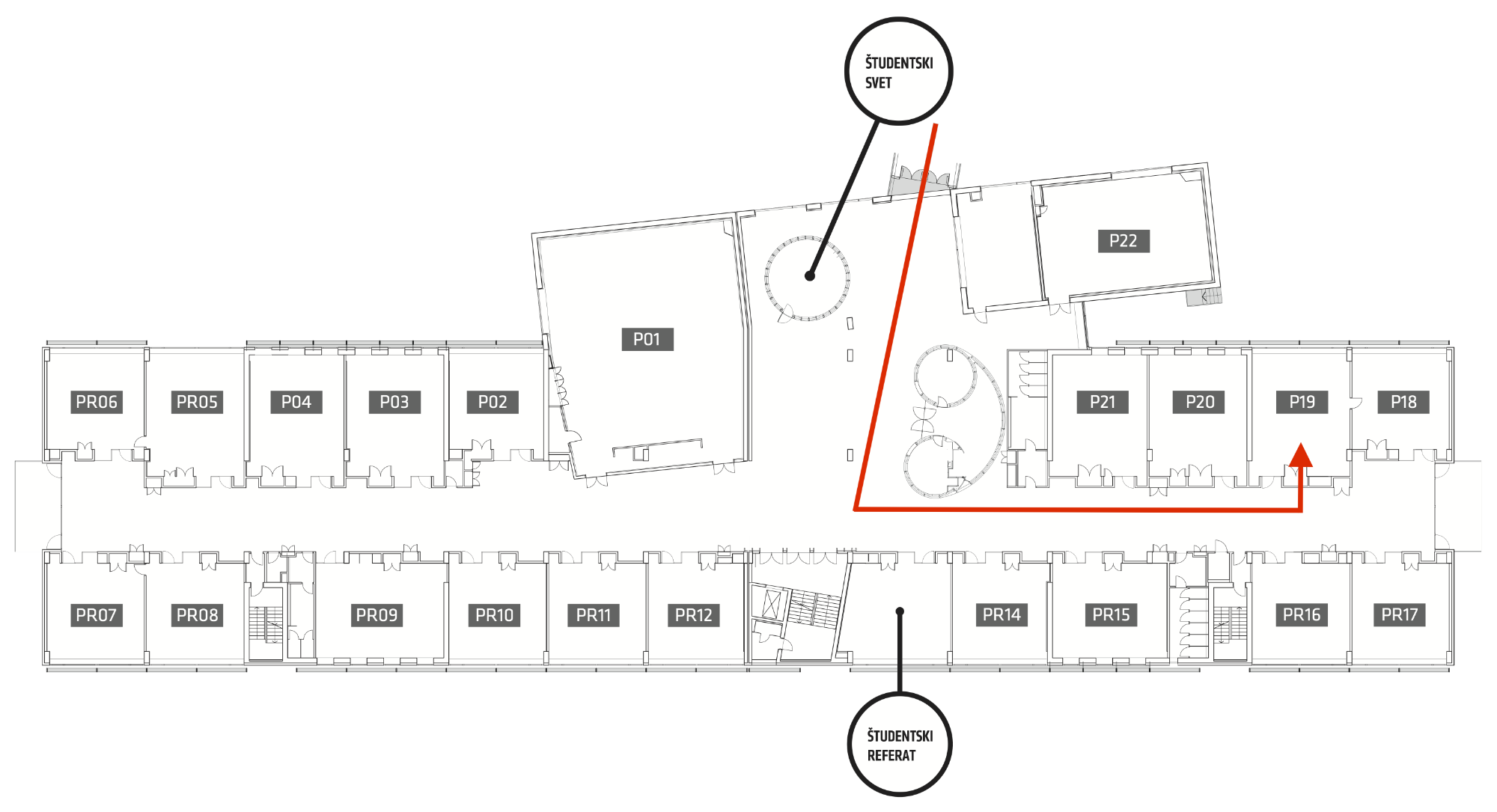

The conference will take place at the Faculty of Computer and Information Science of the University of Ljubljana, Večna pot 113, Ljubljana, Slovenia. The event will be held in lecture room 18-19 (see the image below).